Cortical Labs built a biological computer called CL1 with 200,000 living human neurons. An independent developer wired those neurons into a local LLM. The cells now influence which tokens the AI generates. The project challenges the assumption that AI inference has to run entirely on silicon.

The CL1 sells for $35,000 and keeps its neurons alive in a nutrient solution. Cortical Labs taught it to play Pong and DOOM. The encoder project uses the Cortical Labs API to connect biological firing patterns to token generation. The biological side needs no GPU cluster; the LLM itself still runs locally.

What CL1 LLM Encoder Does

This experimental interface bridges wetware and software. The system reads spontaneous neural activity from the CL1 biological computer. It converts firing patterns into token probability distributions. The LLM then generates output influenced by living cells instead of pure silicon math.

Local processing runs the entire pipeline on your machine. No cloud dependency means full experimental control. The code is open source for researchers to modify and extend.

Technical Architecture

The pipeline has four main components. The CL1 substrate holds the neurons. The Neural Activity API exposes real-time firing data. The Token Encoding Layer maps spikes to vocabulary probabilities. The LLM generates output shaped by biological input.
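To make the Token Encoding Layer more concrete, here is a minimal sketch of one way spike counts could be turned into a shift over token probabilities. It is not taken from the project: the function names, the channel-to-token folding, and the `strength` parameter are all assumptions for illustration, and the spike counts are plain Python lists rather than anything read from the CL1.

```python
import math

def spikes_to_bias(spike_counts, vocab_size):
    """Fold per-channel spike counts into one bias value per vocabulary entry.

    Hypothetical mapping: channel i contributes to token i % vocab_size.
    """
    bias = [0.0] * vocab_size
    for channel, count in enumerate(spike_counts):
        bias[channel % vocab_size] += count
    mean = sum(bias) / vocab_size
    # Zero-mean, so uniform or silent activity leaves the LLM's logits unchanged.
    return [b - mean for b in bias]

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def biased_distribution(llm_logits, spike_counts, strength=0.5):
    """Add the spike-derived bias to the LLM's logits, then normalize."""
    bias = spikes_to_bias(spike_counts, len(llm_logits))
    return softmax([l + strength * b for l, b in zip(llm_logits, bias)])

if __name__ == "__main__":
    logits = [0.0, 0.0, 0.0, 0.0]          # toy LLM with no preference
    spikes = [5, 0, 0, 0, 0, 0, 0, 0]      # one active channel (made-up data)
    print(biased_distribution(logits, spikes))
```

In this sketch the LLM still proposes the distribution and the spikes only tilt it, which matches the article's framing of influence rather than replacement. How the real encoder maps channels to tokens is not described here.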

Neural activity continuously adjusts token selection during generation. The influence happens in real time, at every step of the output sequence. This is not a one-time seed but ongoing biological participation.
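The difference between a one-time seed and ongoing participation can be shown with a toy generation loop. Everything below is a stand-in: `toy_llm_logits` replaces the local LLM, `read_spikes` replaces a live read of firing data with seeded random numbers, and none of these names come from the project or from the Cortical Labs API.

```python
import random

VOCAB = ["the", "cells", "fire", "again", "."]

def toy_llm_logits(context):
    """Stand-in for a local LLM: favors the token that follows the last one in VOCAB."""
    last = VOCAB.index(context[-1]) if context else -1
    return [1.0 if i == (last + 1) % len(VOCAB) else 0.0 for i in range(len(VOCAB))]

def read_spikes(rng, channels=len(VOCAB)):
    """Stand-in for a live neural reading: random spike counts, one per channel."""
    return [rng.randint(0, 3) for _ in range(channels)]

def generate(steps, strength, rng):
    """Greedy decoding where a fresh spike reading biases every single step."""
    context, readings = [], 0
    for _ in range(steps):
        spikes = read_spikes(rng)      # new reading per token, not once up front
        readings += 1
        logits = toy_llm_logits(context)
        scores = [l + strength * s for l, s in zip(logits, spikes)]
        context.append(VOCAB[scores.index(max(scores))])
    return context, readings

if __name__ == "__main__":
    print(generate(5, 0.0, random.Random(0)))  # strength 0: the toy LLM alone decides
    print(generate(5, 1.0, random.Random(0)))  # strength 1: spikes can redirect each step
```

With `strength` set to zero the loop reproduces what the toy model would say on its own. Raising it lets each reading change the choice at that step, and every changed token alters the context for the steps that follow.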

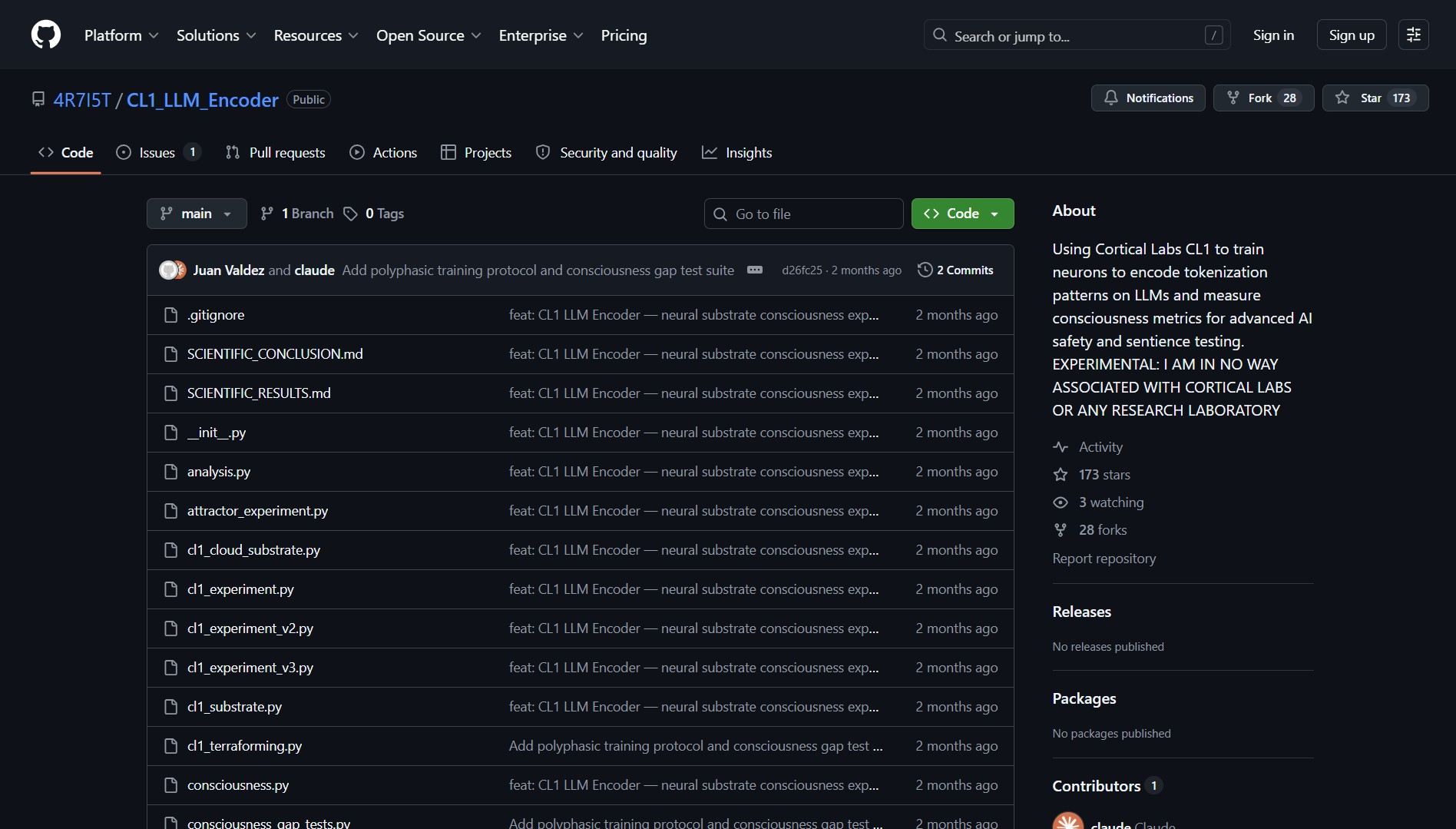

Project Link

https://github.com/4R7I5T/CL1_LLM_Encoder

The Catch

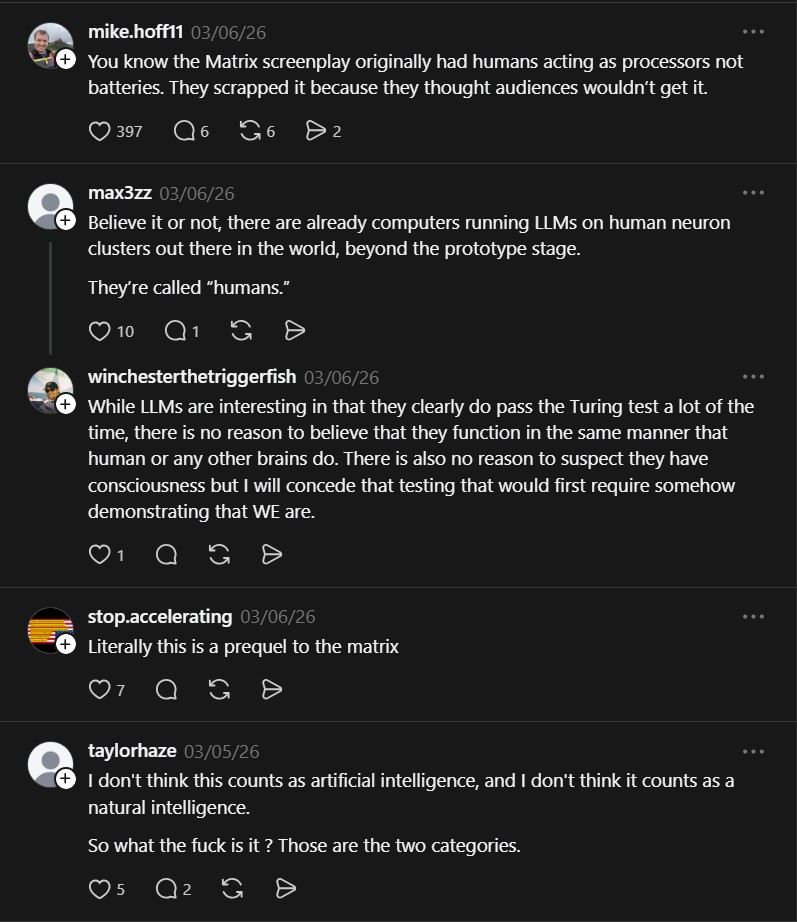

This is research code, not production tooling. The CL1 costs $35,000 and needs biological maintenance. The interface demonstrates a concept rather than replacing GPU inference. Some argue the neurons merely influence the output rather than truly compute it. For now, the philosophical implications may outweigh the practical applications.
