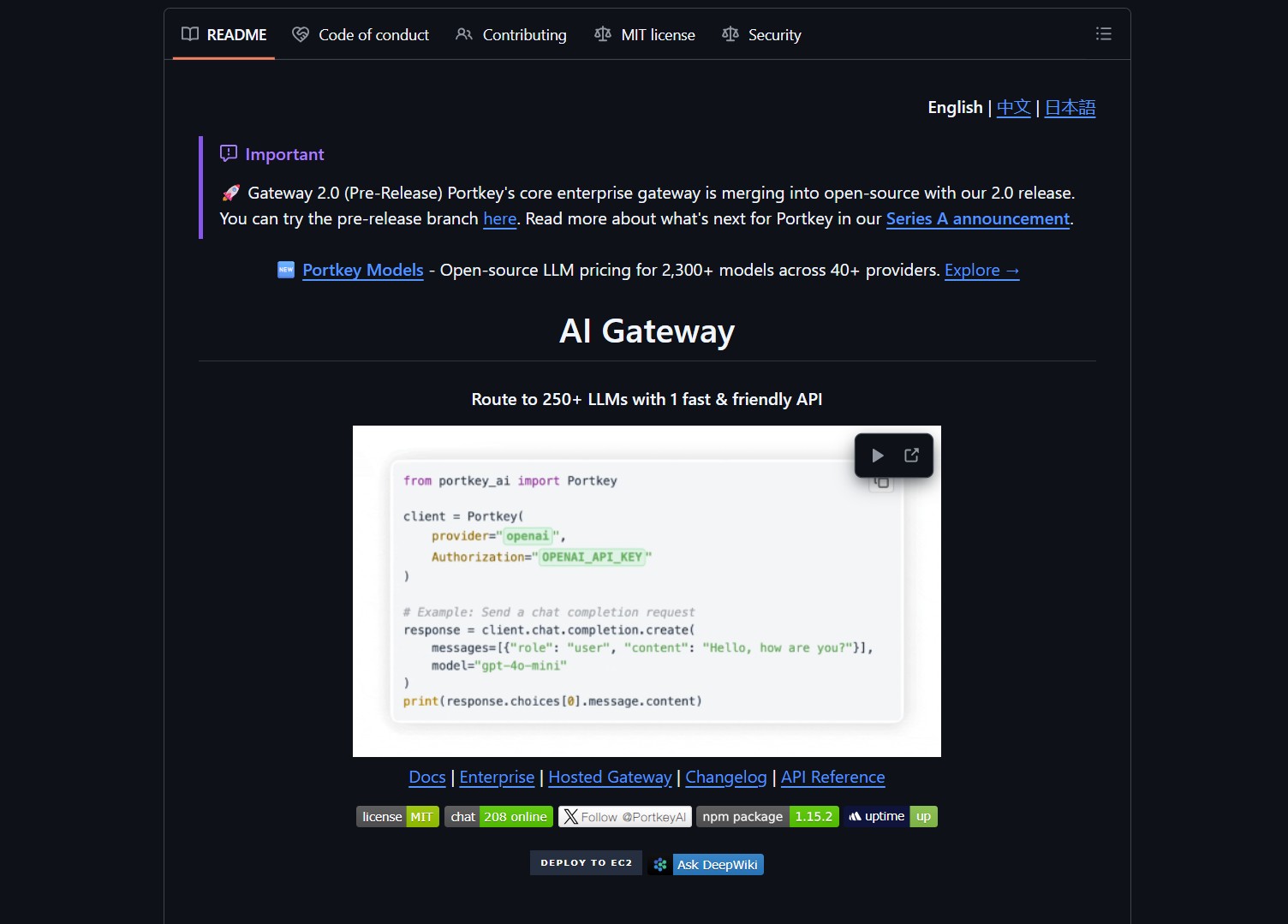

Portkey AI Gateway is a unified routing layer for 1600+ AI models. It provides a consistent interface across multiple providers. This reduces integration complexity for developers and teams. You can switch models without changing application code. The gateway handles load balancing, fallback strategies, and usage analytics.

The platform supports language, vision, audio, and image models. It offers enterprise-grade features like rate limiting and caching. Deployment options include Docker, Cloudflare Workers, and self-hosted setups. The lightweight footprint of 122 KB ensures minimal overhead. Portkey processes over 10 billion tokens daily in production.

Key Features and Capabilities

Portkey adds less than 1 ms of routing latency to each request. It integrates with 1600+ models through a single OpenAI‑compatible API. The system includes intelligent load balancing based on cost and performance. Automatic failover keeps requests flowing when a model is unavailable. Detailed analytics report token usage, costs, and performance metrics.
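To make the load balancing and failover ideas concrete, here is a minimal conceptual sketch in Python. It is not Portkey's actual algorithm. The `Target` fields, the scoring formula, and the `send` callback are all hypothetical, chosen only to show how cost, latency, and ordered fallback can fit together.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass(frozen=True)
class Target:
    """A hypothetical routing target; every field here is illustrative."""
    name: str
    cost_per_1k_tokens: float  # USD, assumed to be known by the caller
    avg_latency_ms: float      # rolling average, assumed to be measured elsewhere


def rank_targets(targets: Sequence[Target], cost_weight: float = 0.5) -> List[Target]:
    """Order targets from most to least preferred (lower blended score wins)."""
    if not targets:
        return []
    # Normalise each metric by the largest value in the pool so they are comparable.
    max_cost = max(t.cost_per_1k_tokens for t in targets) or 1.0
    max_latency = max(t.avg_latency_ms for t in targets) or 1.0

    def score(t: Target) -> float:
        return (cost_weight * t.cost_per_1k_tokens / max_cost
                + (1 - cost_weight) * t.avg_latency_ms / max_latency)

    return sorted(targets, key=score)


def call_with_failover(targets: Sequence[Target], send: Callable[[Target], str]) -> str:
    """Try each target in ranked order and return the first successful reply."""
    errors = []
    for target in rank_targets(targets):
        try:
            return send(target)
        except Exception as exc:  # a real gateway would inspect status codes instead
            errors.append(f"{target.name}: {exc}")
    raise RuntimeError("all targets failed: " + "; ".join(errors))
```

Raising `cost_weight` toward 1 favours cheaper models, and lowering it toward 0 favours faster ones. The failover loop simply walks the ranked list until one call succeeds.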

Security features include API key management and request validation. A plugin system allows custom middleware and extensions. Multi‑region support lets you deploy close to your users. The gateway is open source under the MIT license. Enterprise users can deploy on‑premise or in hybrid environments.

Customer Persona

This tool is for enterprises using multiple AI models across different tasks. Teams that need to compare model performance and costs will benefit, similar to those using LangChain for scalable AI agents. Startups avoid vendor lock‑in by abstracting model providers. Developer teams reduce integration time from days to minutes. AI platform builders create applications that require model flexibility.

DevOps engineers manage API keys and usage across teams. Organizations with strict security and compliance requirements use Portkey. Businesses building marketplaces that connect to multiple AI providers adopt it. Individuals prototyping across different models appreciate the unified interface. Any developer serious about AI integration needs this abstraction layer.

Project link:

https://github.com/portkey-ai/gateway

How to Deploy and How It Works

Deploy Portkey with Docker using a single command: `docker run -d -p 8787:8787 -e PORTKEY_API_KEY=your_key --name portkey-gateway portkeyai/gateway:latest`. This starts the gateway in the background and publishes it on port 8787. For Cloudflare Workers, use the Wrangler CLI with a configuration file. Setup takes less than two minutes per model.
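Once the container is started, it can help to confirm that something is listening on the published port before sending traffic. The sketch below is a generic TCP check, not a Portkey feature. The `wait_for_port` helper is hypothetical, and the default host and port simply mirror the `-p 8787:8787` mapping in the command above.

```python
import socket
import time


def wait_for_port(host: str = "localhost", port: int = 8787, timeout_s: float = 30.0) -> bool:
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        try:
            # Opening and immediately closing a connection is enough to prove
            # that a process is accepting connections on the port.
            with socket.create_connection((host, port), timeout=1.0):
                return True
        except OSError:
            time.sleep(0.5)  # the container may still be starting; retry shortly
    return False
```

A successful check only shows that the port is open. It does not prove that the gateway is configured correctly, so a real request is still the better end-to-end test.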

After deployment, configure routing policies via the web dashboard or API. Define fallback sequences for when primary models are unavailable. Set up rate limits per user or application. Enable caching to reduce costs for repeated queries. Monitor token consumption and latency through built‑in analytics.
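A fallback sequence with caching can be expressed as a small JSON document. The sketch below builds one in Python. The field names (`strategy`, `mode`, `targets`, `override_params`, `cache`, `retry`) reflect our understanding of Portkey's config schema and should be treated as assumptions to verify against the official documentation. The `build_routing_config` helper, the API key placeholders, and the model names are illustrative only. Rate limits are left out because their exact configuration is not described here.

```python
import json
from typing import Optional


def build_routing_config(targets, cache_mode: Optional[str] = "simple",
                         retry_attempts: int = 0) -> str:
    """Serialise a fallback routing policy; targets are tried in list order."""
    config = {
        "strategy": {"mode": "fallback"},  # assumed schema: verify before use
        "targets": list(targets),
    }
    if cache_mode is not None:
        config["cache"] = {"mode": cache_mode}
    if retry_attempts > 0:
        config["retry"] = {"attempts": retry_attempts}
    return json.dumps(config)


# Placeholder credentials and model names; replace with your own values.
example_targets = [
    {"provider": "openai", "api_key": "OPENAI_KEY_PLACEHOLDER",
     "override_params": {"model": "gpt-4o-mini"}},
    {"provider": "anthropic", "api_key": "ANTHROPIC_KEY_PLACEHOLDER",
     "override_params": {"model": "claude-3-5-haiku-latest"}},
]
config_json = build_routing_config(example_targets, retry_attempts=2)
```

The resulting string is what you would hand to the gateway as its routing policy, whether saved through the dashboard or attached to a request. Check the documentation for the exact mechanism.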

Integration with existing applications requires changing only the API endpoint. Update your OpenAI client library to point to Portkey’s gateway URL. Provide your Portkey API key instead of individual provider keys. The system handles authentication and routing transparently. You can now route requests across 1600+ models with a single interface.
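The endpoint swap described above can be sketched with nothing but the Python standard library. Several details here are assumptions: the `http://localhost:8787/v1` base URL (derived from the Docker port mapping plus the usual OpenAI path convention), the key being sent as a bearer token, the placeholder key, and the model name. The gateway may also expect extra headers, such as one naming the provider, so check the documentation before relying on this.

```python
import json
import urllib.request


def build_chat_request(prompt: str, model: str,
                       gateway_url: str = "http://localhost:8787/v1",
                       api_key: str = "PORTKEY_API_KEY_PLACEHOLDER") -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at the gateway."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=gateway_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON reply (requires a running gateway)."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


request = build_chat_request("Say hello in one word.", model="gpt-4o-mini")
```

Calling `send(request)` against a running gateway would return the decoded response. Only the base URL and key differ from a direct provider call, which is the point of the abstraction.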

Market Analysis

The AI gateway space includes alternatives like Cortex, Lunar, and Tonic. Portkey distinguishes itself with its minimal footprint and sub‑1ms latency. Unlike commercial solutions, Portkey is open source and self‑hostable. The unified interface across 1600+ models is a unique selling point. Many enterprises now seek to avoid vendor lock‑in with multi‑model strategies.

Demand for AI model orchestration grows as companies adopt multiple LLMs. Portkey addresses the pain point of managing separate API integrations. Its Cloudflare Workers deployment option appeals to edge‑computing use cases. The project’s production traction, with 4 million monthly active users, signals market validation. As AI usage scales, tools like Portkey become essential infrastructure, much like Gobii for durable autonomous agents.

Advertising Section

For teams deploying AI gateways, monitoring tools like Grafana or Datadog provide visibility. Cloud providers offer managed Kubernetes services for scalable deployments. Consider pairing Portkey with a model‑cost optimization platform. Enterprise support contracts are available for mission‑critical installations. Training and consulting services can accelerate integration.

The Verdict

Portkey AI Gateway solves a real problem: AI model integration complexity. It turns 1600+ models into one consistent, reliable interface. Its open‑source license and production‑ready features make it compelling. Enterprises and developers should consider starting with Portkey even if they use only one model today. The abstraction layer future‑proofs your AI infrastructure.
