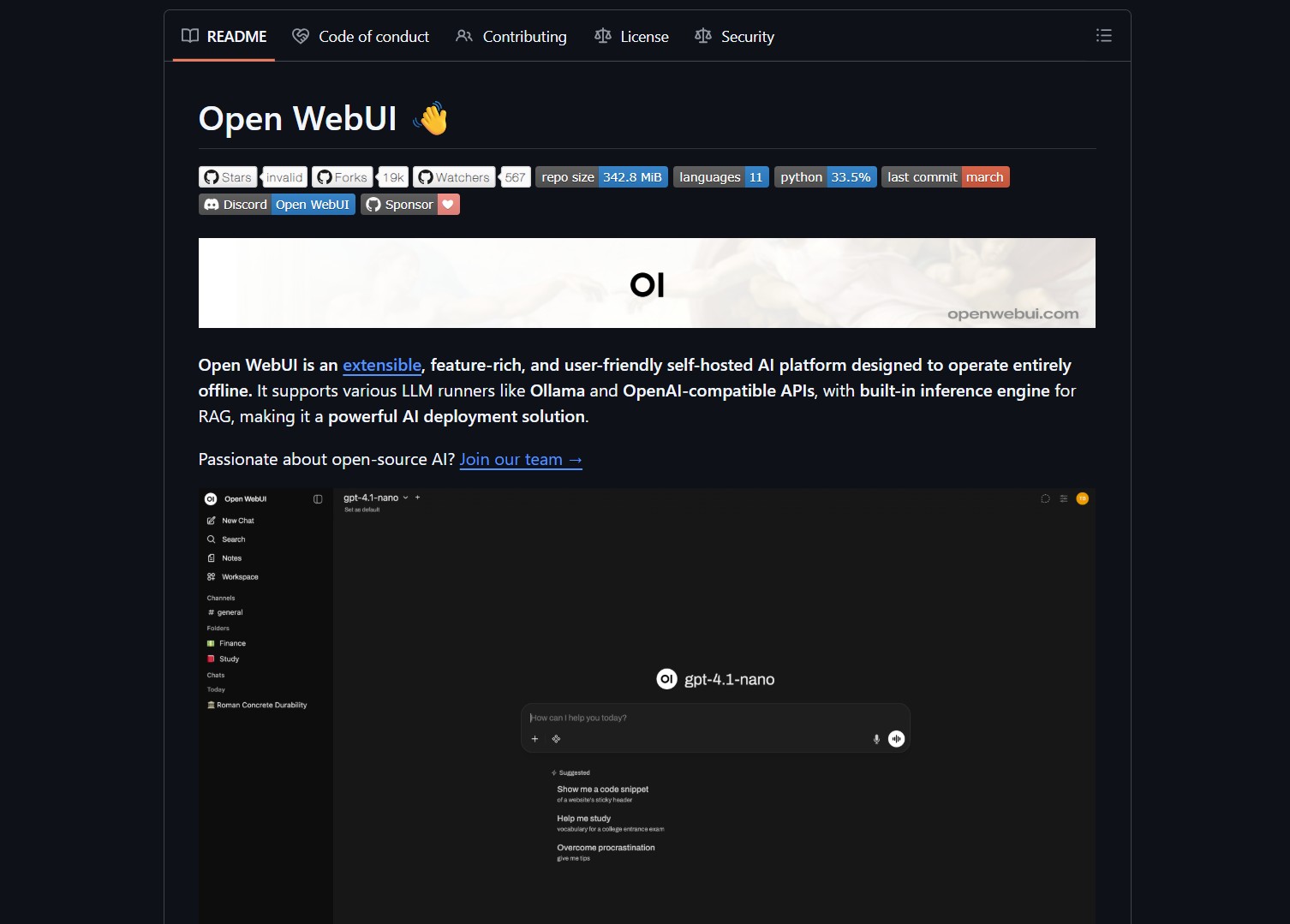

Open WebUI is an extensible self-hosted AI platform designed for privacy and offline use. It supports various large language model runners like Ollama and OpenAI-compatible APIs. This platform enables users to maintain full control over their data and infrastructure. It integrates retrieval-augmented generation (RAG) directly into the interface for improved document processing.
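To make "OpenAI-compatible" concrete, here is a minimal sketch of the request shape such an endpoint generally expects. The helper name `build_chat_request`, the base URL, and the model name are illustrative assumptions and do not come from the Open WebUI project. The sketch only builds the request and does not send it.

```python
import json
import urllib.request


def build_chat_request(base_url, model, prompt, api_key=None):
    """Build (but do not send) a chat completion request.

    Hypothetical helper for illustration. It assumes the server follows
    the OpenAI-style convention of POSTing JSON to <base_url>/chat/completions.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Many OpenAI-compatible servers accept a bearer token; local
        # runners often do not require one.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    # Assumed values: a local runner on port 11434 and a model named "llama3".
    req = build_chat_request("http://localhost:11434/v1", "llama3", "Hello")
    print(req.get_method(), req.full_url)
```

Because the request shape stays the same across compatible servers, switching between a local runner and a hosted provider usually means changing only the base URL, the model name, and the key.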

The platform offers a broad feature set, including local speech-to-text and text-to-speech. It provides built-in support for Model Context Protocol (MCP) servers to access structured data. Users can execute sandboxed Python code through a Jupyter server integration for technical tasks. It also features multi-user management with role-based access control for organizational deployments. This modular design makes it a self-hosted alternative to agent platforms that run in cloud environments.

This tool suits developers and privacy-conscious organizations such as legal or financial firms. It serves AI enthusiasts who prefer running models locally to avoid subscription costs. Researchers use the platform to maintain reproducible environments for sensitive datasets. It also fits anyone who needs a user-friendly frontend for local model inference.

Project link: https://github.com/open-webui/open-webui

Docker is the most straightforward way to deploy, because containers behave consistently across environments. A single `docker run` command starts the system and exposes the local web interface. Once it is running, you connect it to local model runners or external API endpoints. By default, the system handles RAG processing and vector storage internally, with no external services required. This lets developers build systems such as personal AI agents on their own hardware.
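As a rough sketch of the single-command launch, the snippet below assembles a `docker run` invocation modeled on the one in the project's README at the time of writing. The helper name `build_docker_run` and its defaults (host port 3000, the `main` image tag, a named volume called `open-webui`) are assumptions for illustration; check the repository for the currently recommended flags before running it.

```python
import shlex


def build_docker_run(host_port=3000, image_tag="main",
                     container_name="open-webui", volume="open-webui"):
    """Return the argv list for launching Open WebUI in Docker.

    Hypothetical helper: the flags mirror the README example, but the
    defaults here are assumptions and may lag behind the project.
    """
    return [
        "docker", "run", "-d",
        # Map a host port to the container's web port (8080).
        "-p", f"{host_port}:8080",
        # Let the container reach services on the host, e.g. a local runner.
        "--add-host=host.docker.internal:host-gateway",
        # Persist application data in a named volume.
        "-v", f"{volume}:/app/backend/data",
        "--name", container_name,
        "--restart", "always",
        f"ghcr.io/open-webui/open-webui:{image_tag}",
    ]


if __name__ == "__main__":
    cmd = build_docker_run()
    # Print the command for review instead of executing it.
    print(shlex.join(cmd))
    # To start the container for real (requires Docker):
    #   import subprocess; subprocess.run(cmd, check=True)
```

With these defaults the interface would be served at `http://localhost:3000`. Printing the command first makes it easy to review the port and volume choices before starting anything.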

The market for self-hosted AI is growing as privacy concerns with cloud providers increase. Open WebUI competes with monolithic desktop apps by offering a multi-user web-based environment. Its extensible architecture allows it to adapt to new model types and tools quickly. This flexibility ensures it remains relevant as the AI landscape continues to evolve.


Open WebUI is a strong choice for those seeking a private AI command center. It combines professional features with an installation process simple enough for beginners. It does require local hardware resources, but the privacy and cost benefits are significant. It bridges the gap between complex backend setups and an intuitive user experience.
