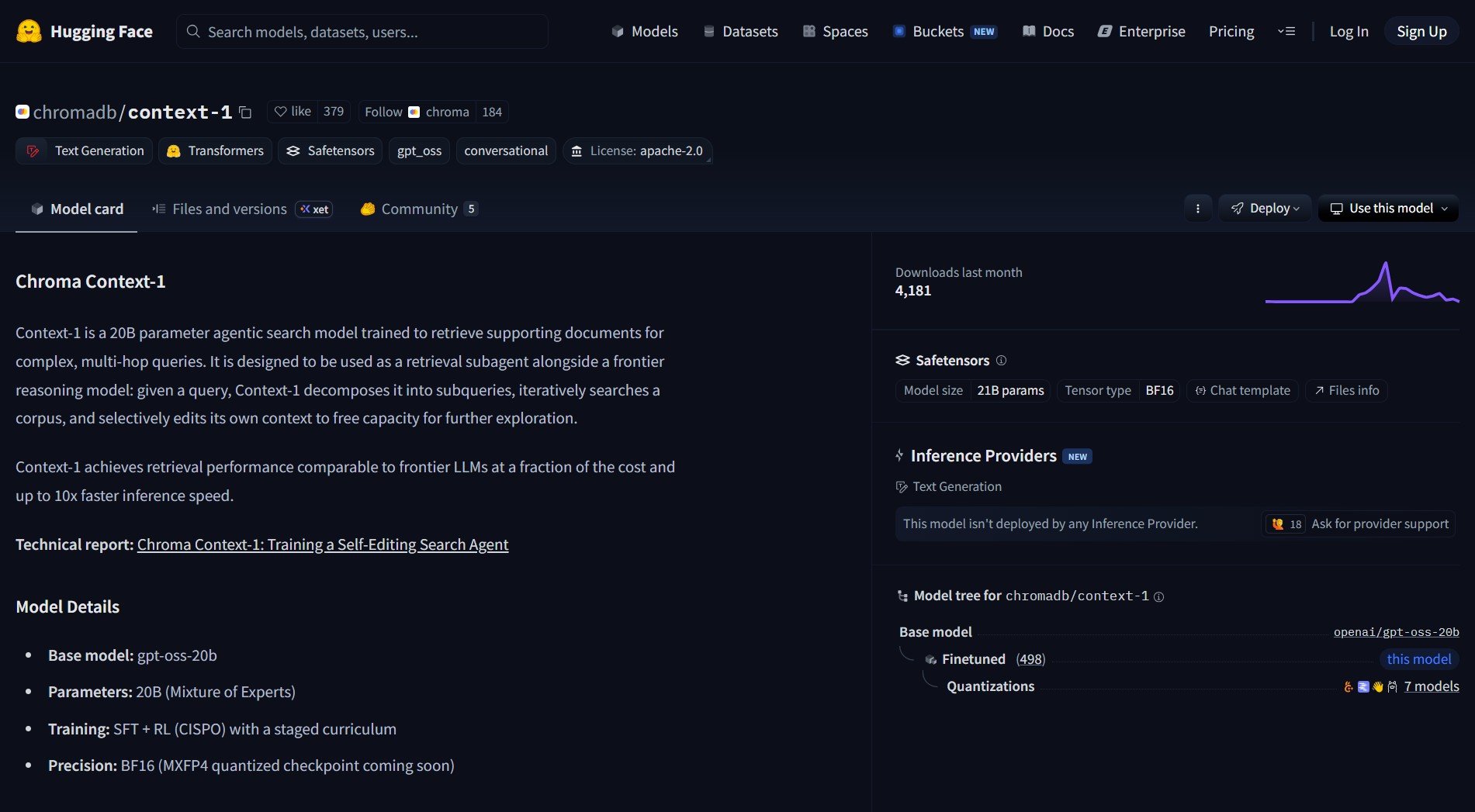

Chroma just open-sourced Context-1, a 20B-parameter agentic search model designed to retrieve supporting documents for complex, multi-hop queries. The model is intended as a retrieval subagent: it decomposes queries, iteratively searches a corpus, and edits its own context to free capacity for further exploration. The result is retrieval performance comparable to frontier LLMs at much lower cost and with faster inference.

Context-1 runs locally and is built for high-quality, low-latency retrieval. It is aimed at developers building retrieval-augmented generation (RAG) systems who want fast, private search without round trips to cloud endpoints.

How It Works

Context-1 decomposes a complex query into smaller subqueries, searches a local corpus for supporting documents, and selectively edits or prunes context to keep the retrieval loop efficient. This iterative decomposition and retrieval pattern helps surface evidence for multi-step questions while keeping latency low.
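
The loop described above can be sketched in a few lines of Python. This is a toy illustration only, not Context-1's implementation: `decompose`, `search`, `prune`, and `retrieve` are hypothetical helpers, the decomposition is a naive split on " and ", and the search is plain keyword overlap rather than a learned model.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    doc_id: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase, split on whitespace, and strip surrounding punctuation."""
    return {w.strip(".,?!").lower() for w in text.split()} - {""}


def decompose(query: str) -> list[str]:
    """Hypothetical stand-in for model-driven decomposition: split on ' and '."""
    return [part.strip() for part in query.split(" and ") if part.strip()]


def search(corpus: list[Doc], subquery: str, k: int = 2) -> list[Doc]:
    """Toy keyword search: rank documents by terms shared with the subquery."""
    terms = tokenize(subquery)
    scored = [(len(terms & tokenize(d.text)), d) for d in corpus]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: -s[0])
    return [d for _, d in ranked[:k]]


def prune(context: list[Doc], budget_chars: int) -> list[Doc]:
    """Drop the oldest documents until the context fits the character budget."""
    kept = list(context)
    while kept and sum(len(d.text) for d in kept) > budget_chars:
        kept.pop(0)
    return kept


def retrieve(corpus: list[Doc], query: str, budget_chars: int = 200) -> list[Doc]:
    """Decompose the query, search per subquery, and prune after each round."""
    context: list[Doc] = []
    seen: set[str] = set()  # documents already read are never re-added
    for subquery in decompose(query):
        for doc in search(corpus, subquery):
            if doc.doc_id not in seen:
                seen.add(doc.doc_id)
                context.append(doc)
        context = prune(context, budget_chars)
    return context


corpus = [
    Doc("d1", "Chroma released Context-1 as an open model."),
    Doc("d2", "Context-1 has 20B parameters."),
    Doc("d3", "Bananas are yellow."),
]
query = "who released Context-1 and how many parameters does it have"
hits = retrieve(corpus, query)
print([d.doc_id for d in hits])
```

Pruning after every round is the part that keeps the working context small; a real agent would decide what to drop based on relevance rather than age.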

Key Capabilities

- Purpose: Fast, high-quality local document retrieval for RAG workflows

- Size: 20B parameters, optimized for speed and footprint

- Runtime: Designed to run locally; examples show MacBook-class inference

- Pairing: Intended to run alongside a frontier reasoning model for best results

Pros and Cons

- Pros: Open-source and runnable locally with no cloud lock-in; competitive retrieval quality; lower inference cost and faster query turnaround

- Cons: Requires local compute, and larger collections need more RAM or CPU; works best as a retrieval subagent, not a full reasoning model; deployment needs integration work

Try It Locally

- Visit the model page on Hugging Face (https://huggingface.co/chromadb/context-1) and read the README and examples

- Follow the upstream instructions for inference; typical approaches include Hugging Face Transformers or Accelerate

- Integrate Context-1 as the retrieval step in your RAG pipeline and pair it with a stronger reasoning model for final answer synthesis
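
One way to picture the last step is a thin pipeline with two swappable parts. The sketch below is an assumption about how such wiring might look, not an API that Context-1 ships: `Retriever`, `Reasoner`, `StubRetriever`, `StubReasoner`, and `rag_answer` are all hypothetical names, and the stubs stand in for a Context-1-backed retriever and a stronger reasoning model.

```python
from typing import Protocol


class Retriever(Protocol):
    def retrieve(self, query: str) -> list[str]: ...


class Reasoner(Protocol):
    def answer(self, query: str, evidence: list[str]) -> str: ...


class StubRetriever:
    """Placeholder for a Context-1-backed retriever (hypothetical)."""

    def __init__(self, passages: list[str]) -> None:
        self.passages = passages

    def retrieve(self, query: str) -> list[str]:
        # Naive substring match; a real retriever would call the model.
        words = query.lower().split()
        return [p for p in self.passages if any(w in p.lower() for w in words)]


class StubReasoner:
    """Placeholder for the reasoning model that writes the final answer."""

    def answer(self, query: str, evidence: list[str]) -> str:
        if not evidence:
            return "No supporting documents found."
        return f"Answer to '{query}' based on {len(evidence)} passage(s)."


def rag_answer(query: str, retriever: Retriever, reasoner: Reasoner,
               max_passages: int = 5) -> str:
    """Retrieve evidence first, then hand it to the reasoner for synthesis."""
    evidence = retriever.retrieve(query)[:max_passages]
    return reasoner.answer(query, evidence)


retriever = StubRetriever(["Context-1 is a 20B parameter model.", "Unrelated text."])
print(rag_answer("context-1 size", retriever, StubReasoner()))
```

Keeping retrieval and reasoning behind separate interfaces makes it straightforward to swap either side without touching the other.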

Local inference performance depends on hardware, quantization strategy, and corpus size. Validate memory and latency on representative data before adopting it for production workflows.
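
As a rough first check on memory, weight size scales with parameter count times bytes per parameter. The helper below is a back-of-the-envelope sketch: `weight_memory_gb` is a hypothetical name, the precision table is a common rule of thumb, and the estimate covers weights only (no KV cache, activations, or runtime overhead). Which precisions are actually available for Context-1 is not stated here, so check the model page.

```python
# Approximate bytes per parameter for common weight precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Estimate weight memory in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# 20B parameters, as stated for Context-1.
for precision in ("fp16", "int8", "int4"):
    print(precision, weight_memory_gb(20e9, precision), "GB")
```

Treat these figures as a lower bound; actual usage on representative queries and corpora will be higher.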

Project link:

https://huggingface.co/chromadb/context-1

“Running retrieval locally changes the workflow more than the benchmark does. Once search is private and instant, people stop batching questions and start using it in the middle of real work. That usually matters more than a leaderboard tie.” — @temporal_day
