PageIndex argues that similarity is not the same as relevance, and it offers a vectorless, reasoning-based approach to retrieval that builds a hierarchical page index and uses LLM-driven tree search to find the most relevant sections. The claim is bold: the project reports 98.7% on FinanceBench, and the repo is worth a close look if you work with complex documents.

PageIndex is designed for single-document and long-document retrieval scenarios where structure and reasoning matter more than nearest-neighbor similarity.

What it is

PageIndex (VectifyAI) is a vectorless retrieval system for retrieval-augmented generation (RAG) workflows. Instead of chunking and embedding, it builds a hierarchical table of contents, then uses an LLM to reason over that index with a tree search strategy, much as an expert would consult a long document. While Chroma Context-1 focuses on local agentic search with embeddings, PageIndex takes the opposite route by eliminating vectors entirely and relying on structured reasoning.
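To make the idea of a hierarchical table of contents concrete, here is a minimal sketch that nests a flat list of headings into a tree. It is not PageIndex's code: the `TocNode` class, the `build_toc` function, and the sample headings are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class TocNode:
    """One entry in a hierarchical table of contents (hypothetical structure)."""
    title: str
    level: int
    children: list["TocNode"] = field(default_factory=list)


def build_toc(headings: list[tuple[int, str]]) -> TocNode:
    """Nest (level, title) pairs under a synthetic root using a stack.

    Levels are assumed to start at 1, so the level-0 root is never popped.
    """
    root = TocNode(title="ROOT", level=0)
    stack = [root]
    for level, title in headings:
        node = TocNode(title=title, level=level)
        # Pop until the top of the stack is a shallower heading: that is the parent.
        while stack[-1].level >= level:
            stack.pop()
        stack[-1].children.append(node)
        stack.append(node)
    return root


def render(node: TocNode, depth: int = 0) -> list[str]:
    """Flatten the tree into indented lines for inspection (root is skipped)."""
    lines = [] if node.level == 0 else ["  " * (depth - 1) + node.title]
    for child in node.children:
        lines.extend(render(child, depth + 1))
    return lines


# Made-up headings standing in for a parsed document outline.
headings = [
    (1, "Annual Report"),
    (2, "Financial Statements"),
    (3, "Balance Sheet"),
    (3, "Cash Flow"),
    (2, "Risk Factors"),
]
toc = build_toc(headings)
print("\n".join(render(toc)))
```

A real index would also attach page ranges or section summaries to each node so that a model has something to reason over; those fields are left out here to keep the structure visible.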

How it works

At a high level PageIndex performs retrieval in two steps:

- Generate a tree-style table of contents from the document, splitting content into meaningful page or section nodes.

- Run a reasoning-based tree search that expands promising nodes, uses the LLM to score relevance, and prunes branches to focus on the most relevant passages.
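The second step can be sketched as a level-by-level search that scores each child, keeps the best few, and drops the rest. This is an illustration under stated assumptions, not the project's implementation: `Node`, `tree_search`, `beam_width`, and `min_score` are hypothetical names, and `keyword_score` is a crude word-overlap placeholder standing in for the LLM relevance call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Node:
    """A section in the index tree (hypothetical structure)."""
    title: str
    summary: str = ""
    children: list["Node"] = field(default_factory=list)


def keyword_score(query: str, node: Node) -> float:
    """Placeholder for the LLM relevance call: share of query words found in the node text."""
    words = set(query.lower().split())
    text = f"{node.title} {node.summary}".lower()
    return sum(w in text for w in words) / len(words) if words else 0.0


def tree_search(
    root: Node,
    query: str,
    score: Callable[[str, Node], float],
    beam_width: int = 2,
    min_score: float = 0.0,
) -> list[Node]:
    """Descend one level at a time, keeping only the best-scoring nodes."""
    frontier = [root]
    selected: list[Node] = []
    while frontier:
        candidates = [child for node in frontier for child in node.children]
        scored = sorted(
            ((score(query, c), c) for c in candidates),
            key=lambda pair: pair[0],
            reverse=True,
        )
        # Prune: keep at most beam_width nodes, and only those above the threshold.
        kept = [c for s, c in scored[:beam_width] if s > min_score]
        selected.extend(c for c in kept if not c.children)  # leaves are results
        frontier = [c for c in kept if c.children]          # inner nodes are expanded
    return selected


# Made-up tree standing in for an indexed financial report.
root = Node("10-K", children=[
    Node("Financial Statements", "balance sheet, income statement, cash flow", [
        Node("Balance Sheet", "assets and liabilities"),
        Node("Cash Flow", "operating cash flow and capital expenditure"),
    ]),
    Node("Risk Factors", "market and regulatory risk", [
        Node("Market Risk", "interest rate exposure"),
    ]),
])

results = tree_search(root, "operating cash flow", keyword_score)
print([n.title for n in results])
```

Because the scorer is passed in as a function, swapping the placeholder for a call to a reasoning model does not change the search loop. The quality of the result then depends on how well that model judges each node.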

This retrieval approach is a strong alternative for developers who have tried BRAG and want a different paradigm that avoids embedding pipelines, especially for single-document scenarios with deep structural hierarchy.

    # quick start
    git clone https://github.com/VectifyAI/PageIndex.git
    cd PageIndex
    # follow README for example indexing and retrieval commands

| Feature | Notes |
|---|---|
| Vectorless retrieval | No embeddings, no vector DB, no chunking |
| Hierarchical index | Tree structure mirrors document layout and semantics |
| Reasoning search | LLM-guided tree search to find relevant sections |
| Use case | Long reports, manuals, finance docs, legal briefs |

Pair PageIndex with a strong reasoning model for final answer synthesis. PageIndex focuses on relevance selection rather than answer generation.
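One way to picture that hand-off is a small function that packs the selected sections and the question into a single prompt for the answering model. This is a sketch only: `build_answer_prompt`, the prompt wording, and the sample section are assumptions, and the call to the model itself is left out.

```python
def build_answer_prompt(question: str, sections: list[tuple[str, str]]) -> str:
    """Combine retrieved (title, text) sections and a question into one prompt."""
    context = "\n\n".join(
        f"[{i}] {title}\n{text}" for i, (title, text) in enumerate(sections, start=1)
    )
    return (
        "Answer the question using only the sections below. "
        "Cite section numbers in brackets.\n\n"
        f"{context}\n\nQuestion: {question}\nAnswer:"
    )


# Made-up section text standing in for what retrieval returned.
sections = [("Cash Flow", "Placeholder text of the cash flow section.")]
prompt = build_answer_prompt("What was operating cash flow?", sections)
print(prompt)
```

Numbering the sections gives the answering model something to cite, which makes it easier to trace an answer back to the part of the tree that was selected.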

Pros and cons

Pros

- Avoids vector DB complexity and indexing costs

- Better alignment with true relevance for long, structured documents

- Compact index, interpretable tree search, and strong single-document performance

Cons

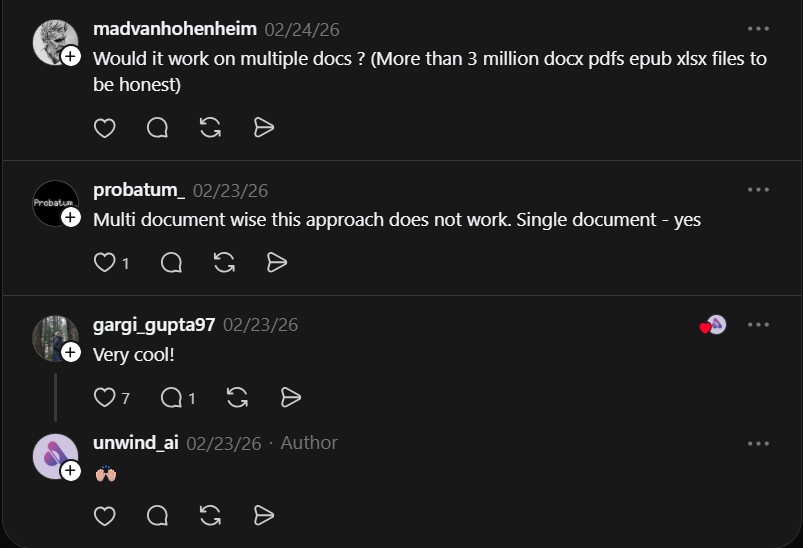

- May not scale as well to large multi-document corpora without additional orchestration

- Depends on the reasoning model to score and expand nodes accurately

- Requires work to integrate into existing RAG pipelines that expect embeddings
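The integration cost in the last point can be reduced if the rest of the pipeline depends on a narrow retriever interface rather than on embeddings directly. The sketch below assumes such an interface; `Retriever`, `TreeRetriever`, `answer_context`, and the stub selection function are hypothetical names, not part of PageIndex or any RAG framework.

```python
from typing import Callable, Protocol


class Retriever(Protocol):
    """The only thing downstream code needs: text passages for a query."""

    def retrieve(self, query: str, k: int) -> list[str]: ...


class TreeRetriever:
    """Wraps any section-selection function behind the common interface."""

    def __init__(self, select_sections: Callable[[str], list[str]]) -> None:
        self._select = select_sections

    def retrieve(self, query: str, k: int) -> list[str]:
        return self._select(query)[:k]


def answer_context(retriever: Retriever, query: str, k: int = 3) -> str:
    """Downstream code sees passages only, never embeddings or tree nodes."""
    return "\n---\n".join(retriever.retrieve(query, k))


def stub_select(query: str) -> list[str]:
    """Stand-in for a tree search; returns fixed passages for illustration."""
    return ["Section A text", "Section B text", "Section C text", "Section D text"]


context = answer_context(TreeRetriever(stub_select), "any question", k=2)
print(context)
```

An existing vector retriever can satisfy the same `retrieve` signature, so the two approaches can be swapped or run side by side without touching the answer-generation code.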

Try it locally

- Clone the repo and read the examples to build a tree index from sample documents.

- Run the supplied retrieval scripts and compare results against a vector baseline on representative tasks.
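For the comparison step, a small harness that measures how often the expected section shows up in the top results is usually enough to start. The sketch below is an assumption about how you might set this up: `hit_rate` is a hypothetical helper, and the two stub retrievers return canned, made-up outputs that exist only to exercise the function. They do not represent results from PageIndex or from any vector system.

```python
from typing import Callable


def hit_rate(
    retrieve: Callable[[str], list[str]],
    labeled_queries: list[tuple[str, str]],
    k: int = 3,
) -> float:
    """Share of queries whose expected section title appears in the top-k results."""
    if not labeled_queries:
        raise ValueError("labeled_queries must not be empty")
    hits = sum(gold in retrieve(query)[:k] for query, gold in labeled_queries)
    return hits / len(labeled_queries)


# Made-up (query, expected section) pairs; replace with your own labeled set.
labeled = [
    ("What was operating cash flow?", "Cash Flow"),
    ("Which risks relate to interest rates?", "Market Risk"),
]

# Canned outputs, not measurements of any real retriever.
canned_a = {
    labeled[0][0]: ["Cash Flow", "Balance Sheet"],
    labeled[1][0]: ["Balance Sheet", "Market Risk"],
}
canned_b = {
    labeled[0][0]: ["Balance Sheet", "Cash Flow"],
    labeled[1][0]: ["Risk Factors"],
}


def stub_a(query: str) -> list[str]:
    return canned_a[query]


def stub_b(query: str) -> list[str]:
    return canned_b[query]


print(hit_rate(stub_a, labeled, k=1))
print(hit_rate(stub_b, labeled, k=2))
```

Plug your tree-based retriever and your vector baseline in place of the stubs, keep `k` and the labeled set identical for both, and compare the two numbers on queries that reflect your real workload.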

This approach shines on single long documents, but multi-document setups may need additional design. Validate on your dataset before committing to a full migration away from vector search.

Project link

Here is what people are saying:

“Multi document wise this approach does not work. Single document – yes” @probatum_

If you enjoy articles about top GitHub repositories like this, don’t forget to subscribe to Technolati.com.
