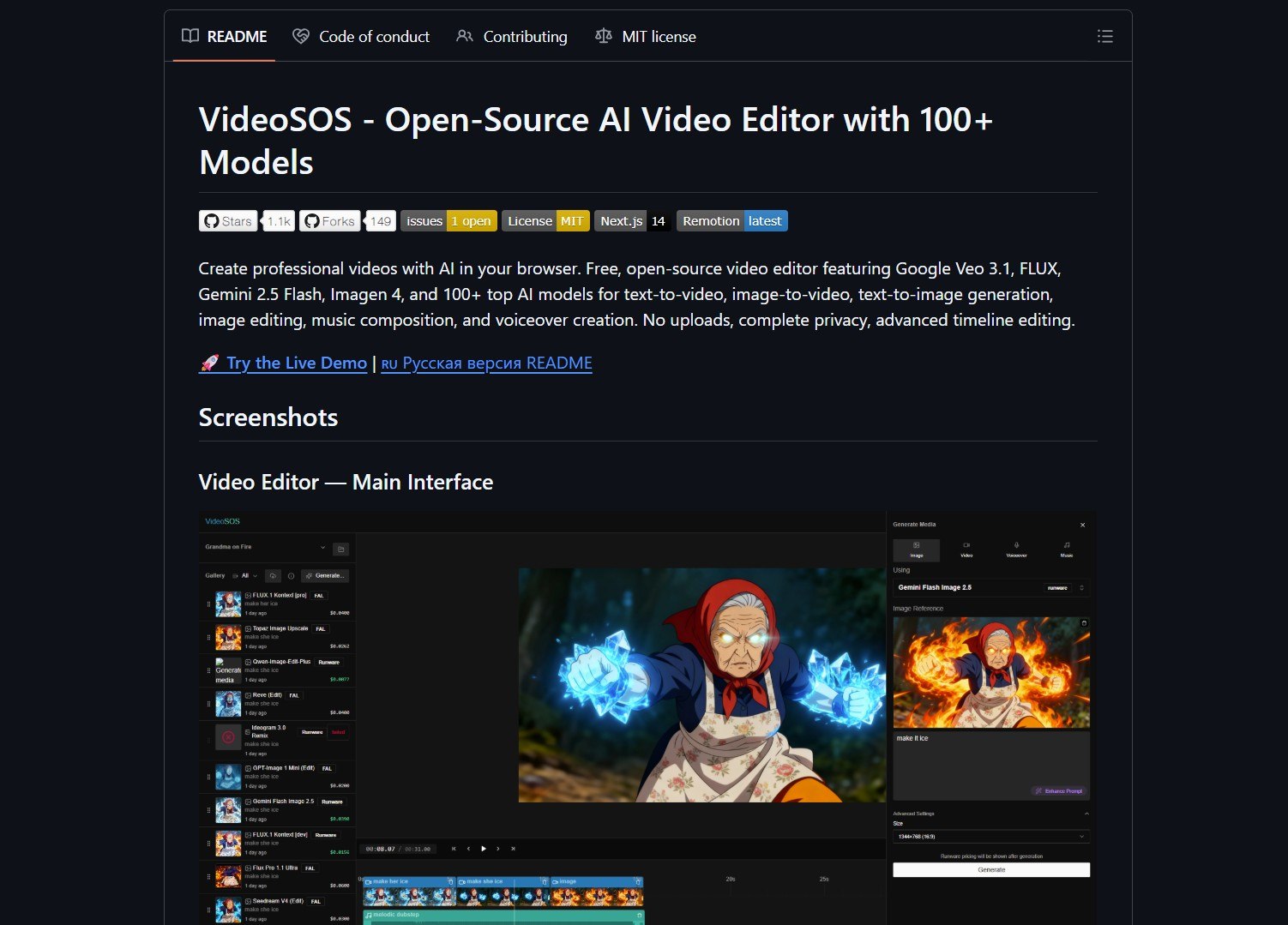

VideoSOS is an open-source, browser-first video editor that exposes more than 100 AI models through a single interface. It covers text-to-video, image-to-video, image editing, music composition, and voiceover creation, with the aim of keeping your media off the cloud. The repo bundles integrations with fal.ai and Runware.ai, both hosted inference providers, and supports models such as Google Veo 3.1, Gemini 2.5 Flash, and Imagen 4.

The stated goal is zero uploads and complete privacy by running as much as possible in the client or via local runtimes. You can generate short clips from prompts, edit frames, create voiceovers, and assemble multi-track projects on a standard timeline. This makes it a strong option for privacy-conscious content creation and rapid video prototyping.

Key Features

VideoSOS offers broad model coverage for video, image, and audio tasks. Its timeline editor supports standard NLE workflows for assembling and polishing multi-track projects. The local-first promise means no uploads, subject to which models actually run on your hardware.

It supports text-to-video and image-to-video generation, image editing, text-to-speech with multiple synthesis engines, and music composition.

Project Link

https://github.com/timoncool/videosos

How It Works

VideoSOS wires together three pieces: model runtimes, a web UI timeline editor, and integration adapters that let you switch providers. Where a local runtime is not possible, the project provides adapters for fal.ai and Runware.ai, so generation can still happen without user-managed servers. The pattern resembles a unified model gateway, where one interface routes requests to interchangeable providers.
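To make the adapter idea concrete, here is a minimal sketch of provider switching. It is not taken from the VideoSOS codebase: the `ProviderAdapter` interface, the `pickAdapter` function, and the task lists assigned to each provider are all hypothetical placeholders chosen for illustration.

```typescript
// Hypothetical types: none of these names come from the VideoSOS repo.
type Task = "text-to-video" | "image-to-video" | "text-to-speech";

interface ProviderAdapter {
  name: string;     // label for the provider, e.g. "local" or "fal.ai"
  remote: boolean;  // true if prompts or media leave the machine
  supports(task: Task): boolean;
}

// Pick an adapter for a task, preferring local execution and
// optionally refusing remote providers altogether.
function pickAdapter(
  adapters: ProviderAdapter[],
  task: Task,
  allowRemote: boolean,
): ProviderAdapter | undefined {
  const candidates = adapters.filter(
    (a) => a.supports(task) && (allowRemote || !a.remote),
  );
  // Stable sort: local adapters first, original order otherwise.
  candidates.sort((a, b) => Number(a.remote) - Number(b.remote));
  return candidates[0];
}

function makeAdapter(name: string, remote: boolean, tasks: Task[]): ProviderAdapter {
  return { name, remote, supports: (t) => tasks.indexOf(t) !== -1 };
}

// Placeholder capabilities, NOT the providers' real model catalogs.
const adapters: ProviderAdapter[] = [
  makeAdapter("fal.ai", true, ["text-to-video", "text-to-speech"]),
  makeAdapter("local", false, ["text-to-speech"]),
  makeAdapter("runware.ai", true, ["text-to-video"]),
];

const speech = pickAdapter(adapters, "text-to-speech", true);  // local wins
const video = pickAdapter(adapters, "text-to-video", false);   // undefined
```

With `allowRemote` set to `false`, a task that only remote providers support returns `undefined` instead of silently uploading, which is one way to keep a no-upload promise explicit in code.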

Start with a single generation pipeline on a local machine with known GPU or CPU capability before attempting a full 100-model experiment. The quick start is straightforward: clone the repo, install local runtimes or configure provider adapters, and test a text-to-image-to-video pipeline.
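A single-pipeline pilot can be sketched as a chain of typed stages. The code below is an assumption-laden illustration, not VideoSOS code: `Artifact`, `Stage`, `stubStage`, and `runPipeline` are hypothetical names, and the stub stages stand in for real model calls, which would be asynchronous in practice.

```typescript
// Hypothetical artifact and stage types for a text -> image -> video pilot.
type Kind = "text" | "image" | "video";

interface Artifact {
  kind: Kind;
  payload: string;    // placeholder for real data (bytes, blob URL, ...)
  history: string[];  // names of the stages that produced this artifact
}

interface Stage {
  name: string;
  accepts: Kind;
  produces: Kind;
  run(input: Artifact): Artifact;
}

// Stub stage factory: stands in for a real (asynchronous) model call.
function stubStage(name: string, accepts: Kind, produces: Kind): Stage {
  return {
    name,
    accepts,
    produces,
    run: (input) => ({
      kind: produces,
      payload: `${produces}(${input.payload})`,
      history: input.history.concat(name),
    }),
  };
}

// Run stages in order, failing fast when a stage gets the wrong input kind.
function runPipeline(stages: Stage[], input: Artifact): Artifact {
  return stages.reduce((artifact, stage) => {
    if (artifact.kind !== stage.accepts) {
      throw new Error(`${stage.name} expects ${stage.accepts}, got ${artifact.kind}`);
    }
    return stage.run(artifact);
  }, input);
}

const pilot: Stage[] = [
  stubStage("text-to-image", "text", "image"),
  stubStage("image-to-video", "image", "video"),
];

const clip = runPipeline(pilot, { kind: "text", payload: "a red kite", history: [] });
// clip.kind === "video", clip.history lists both stages in order
```

Starting from stubs like these lets you confirm the wiring between stages before spending GPU time or provider credits on real generations.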

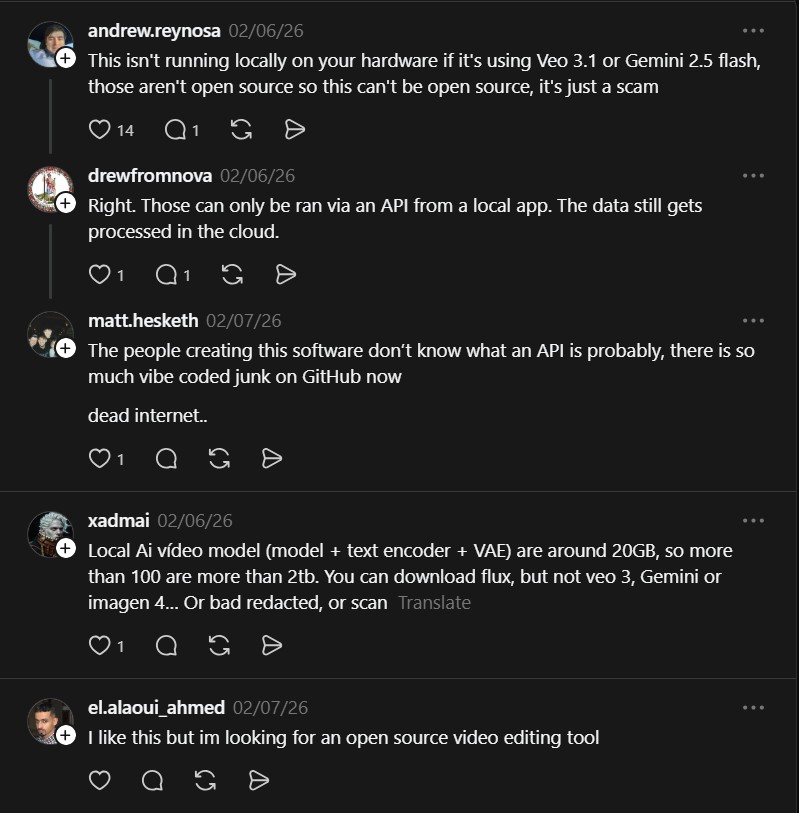

The Catch

Claims about running proprietary models locally should be validated. Many of the named models, such as Gemini and Imagen, are not fully open-source or available for local execution without licensing. Large models are also resource intensive, and headline model counts sometimes conflate remote provider integrations with true local execution. Verify which models the repo actually runs locally, and respect the licensing terms of proprietary models. Evaluate GPU, VRAM, and disk requirements before experimenting.
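One way to run that check is to keep a small inventory of the models you plan to try and compare it against your machine. The sketch below is illustrative only: the `ModelEntry` shape, the `preflight` function, the model ids, and every resource figure are made-up placeholders, not values published by VideoSOS or any model vendor.

```typescript
// Hypothetical inventory entry; fill in real values from each model's docs.
interface ModelEntry {
  id: string;
  execution: "local" | "remote";
  minVramGb?: number;  // only meaningful for local models
  diskGb?: number;     // download size for local models
}

interface Machine {
  vramGb: number;
  freeDiskGb: number;
}

interface PreflightReport {
  remote: string[];    // media or prompts leave the machine for these
  runnable: string[];  // local models that fit this machine
  blocked: string[];   // local models that exceed VRAM or disk
}

function preflight(models: ModelEntry[], machine: Machine): PreflightReport {
  const fits = (m: ModelEntry) =>
    (m.minVramGb ?? 0) <= machine.vramGb && (m.diskGb ?? 0) <= machine.freeDiskGb;
  const local = models.filter((m) => m.execution === "local");
  return {
    remote: models.filter((m) => m.execution === "remote").map((m) => m.id),
    runnable: local.filter(fits).map((m) => m.id),
    blocked: local.filter((m) => !fits(m)).map((m) => m.id),
  };
}

// Placeholder ids and numbers for illustration only.
const report = preflight(
  [
    { id: "example-remote-video", execution: "remote" },
    { id: "example-local-tts", execution: "local", minVramGb: 4, diskGb: 2 },
    { id: "example-local-video", execution: "local", minVramGb: 24, diskGb: 40 },
  ],
  { vramGb: 8, freeDiskGb: 100 },
);
```

Anything that lands in `remote` falls outside the zero-upload promise, and anything in `blocked` needs different hardware before it is worth a pilot.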

VideoSOS is compelling as a distribution idea: a browser editor that unifies many media models under a single timeline workflow. The practical limits are hardware and model licensing, so validate runtimes and start with a constrained pilot.
