Parlant aims to replace the “roll of the dice” approach to agent behavior with contextual guidelines that activate based on conversation state, keeping LLM agents grounded and consistent in regulated workflows.

Parlant focuses on Conversation Modeling, where modular, natural language guidelines are matched to the current context and enforced, instead of relying on brittle, long system prompts.

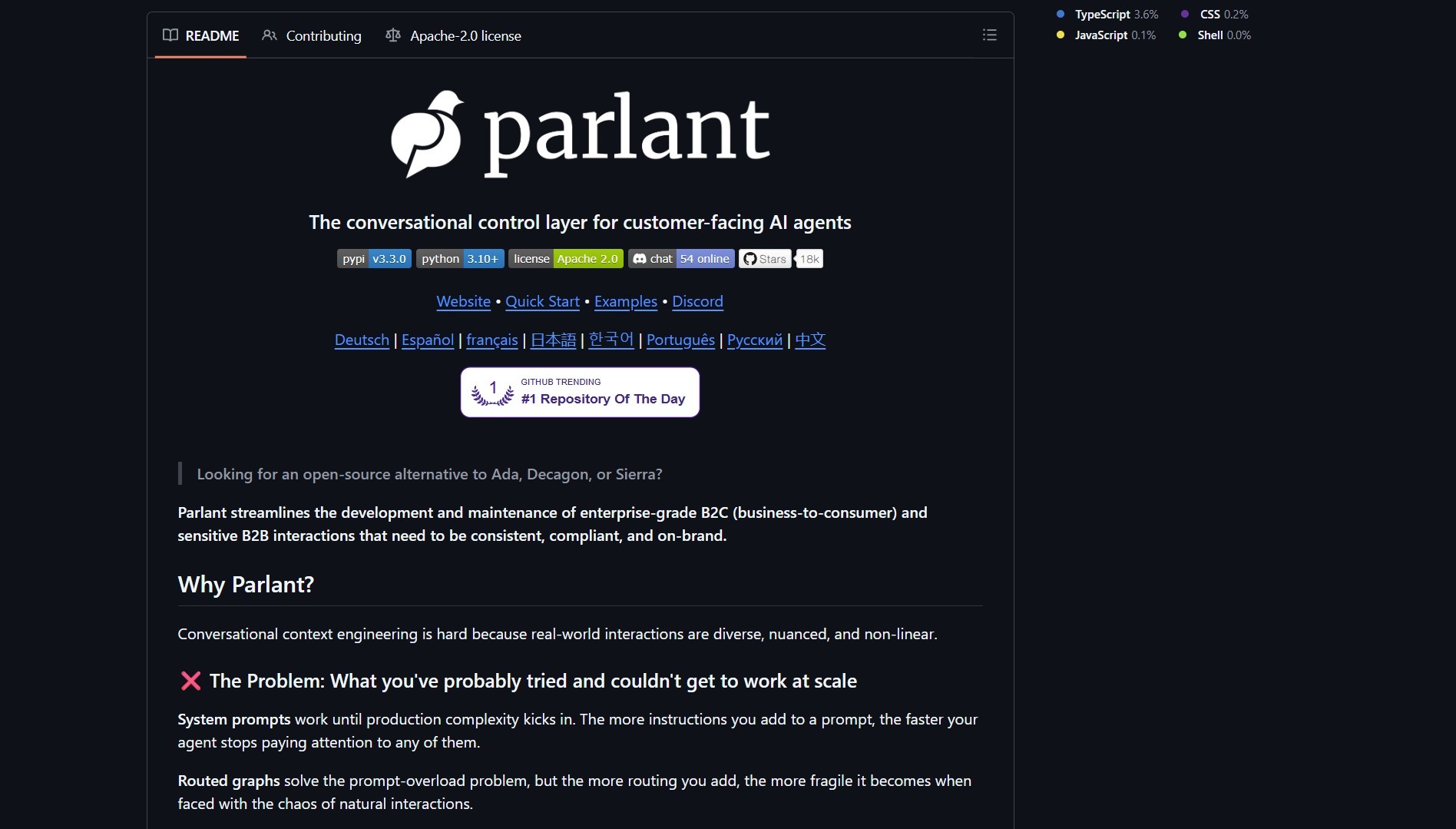

What it is

Parlant is an open-source Python framework that helps teams build production-safe agents by defining contextual guidelines, usage rules for tools, and utterance templates that reduce hallucinations. Unlike Eigent, which focuses on orchestrating a local multi-agent workforce, Parlant concentrates on grounding individual agent behavior through human-readable rule modules. The engine matches the relevant guidelines to each interaction, so the model follows the rules while preserving a natural conversational style.

How it works

At a high level, Parlant provides:

- Guideline modules, written in plain language, that describe expected behavior and constraints.

- A matcher that selects active guidelines based on conversation state.

- Tool wrappers with enforced usage rules, and utterance templates that limit risky outputs.

This structured approach to agent safety complements runtime-level protections offered by tools like NVIDIA NemoClaw, making Parlant useful at the behavior layer rather than the infrastructure layer.
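The matching idea can be illustrated with a small, self-contained sketch. This is not Parlant's API: the `Guideline` class, `match_guidelines`, `build_prompt`, and the keyword-based conditions are all hypothetical stand-ins, and a real engine would evaluate natural-language conditions rather than simple predicates.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Guideline:
    """Hypothetical guideline: a condition over conversation state plus an instruction."""
    name: str
    condition: Callable[[dict], bool]
    action: str


def match_guidelines(guidelines: list, state: dict) -> list:
    """Return only the guidelines whose condition holds for the current state."""
    return [g for g in guidelines if g.condition(state)]


# Illustrative rules; keyword checks stand in for real contextual matching.
GUIDELINES = [
    Guideline(
        name="refund_policy",
        condition=lambda s: "refund" in s["last_message"].lower(),
        action="Explain the refund policy before offering a refund.",
    ),
    Guideline(
        name="verify_identity",
        condition=lambda s: s.get("topic") == "account" and not s.get("verified", False),
        action="Ask the customer to verify their identity before discussing account details.",
    ),
]


def build_prompt(state: dict) -> str:
    """Assemble a short instruction block from the active guidelines only."""
    active = match_guidelines(GUIDELINES, state)
    return "Follow these guidelines:\n" + "\n".join(f"- {g.action}" for g in active)
```

The point of the sketch is that only the rules relevant to the current turn reach the model, which is what keeps the instruction set short compared with one long system prompt.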

```shell
# quick install example
pip install parlant

# or run the repo locally
git clone https://github.com/emcie-co/parlant.git
cd parlant
# follow README for examples and runtime
```

| Feature | Notes |
|---|---|
| Conversation Modeling | Contextual guidelines replace long system prompts |
| Tool integration | Built-in wrappers with enforceable usage policies |
| Templates | Utterance templates reduce hallucination risk |
| Use case | Customer support, finance, healthcare, legal workflows |

Start by authoring a small set of guidelines for a single workflow, then run the agent against guarded test conversations to validate rule activation and compliance.
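One way to approach that validation step is a table of test messages paired with the guidelines you expect to activate. The sketch below is a plain-Python illustration, not Parlant's testing tooling: the `GUIDELINES` dictionary, the keyword conditions, and the `run_checks` helper are assumptions made for the example.

```python
from typing import Callable

# Hypothetical guidelines: name -> condition over the latest user message.
GUIDELINES: dict[str, Callable[[str], bool]] = {
    "refund_policy": lambda msg: "refund" in msg.lower(),
    "no_medical_advice": lambda msg: any(w in msg.lower() for w in ("dosage", "diagnos")),
}

# Each test case pairs a message with the set of guidelines expected to activate.
TEST_CONVERSATIONS = [
    ("Can I get a refund for my order?", {"refund_policy"}),
    ("What dosage should I take?", {"no_medical_advice"}),
    ("What are your opening hours?", set()),
]


def active_guidelines(message: str) -> set:
    """Names of all guidelines whose condition matches the message."""
    return {name for name, condition in GUIDELINES.items() if condition(message)}


def run_checks(cases: list) -> list:
    """Return (message, expected, actual) for every case where activation differs."""
    failures = []
    for message, expected in cases:
        actual = active_guidelines(message)
        if actual != expected:
            failures.append((message, expected, actual))
    return failures


if __name__ == "__main__":
    for message, expected, actual in run_checks(TEST_CONVERSATIONS):
        print(f"MISMATCH: {message!r} expected {expected}, got {actual}")
```

Including a case that should activate nothing is a cheap way to catch rules that fire too broadly.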

Pros and cons

Pros

- Stronger compliance, better consistency across conversations

- Modular rules are auditable and editable by humans

- Integrates with common LLM providers and toolchains

Cons

- Requires careful guideline authoring and testing

- Mis-specified rules can overconstrain agents, reducing flexibility

- Production integration needs monitoring and human-in-the-loop review for edge cases

Parlant gives agents behavior rules and tool access, but you still must validate guidelines, monitor runtime behavior, and sandbox tool effects before deploying to real users.

Try it locally

- Clone the repo and run the examples in a sandboxed environment.

- Write a few guideline modules, simulate conversations, and inspect which rules activate.

- Integrate tool wrappers gradually, and keep human approvals for high-risk actions.
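The human-approval step for high-risk actions could look something like the sketch below. It is a generic Python decorator, not Parlant's tool interface: `guarded_tool`, `ApprovalRequired`, the `approved_by` argument, and both example tools are hypothetical names chosen for illustration.

```python
from functools import wraps


class ApprovalRequired(Exception):
    """Raised when a high-risk tool is called without a named human approver."""


def guarded_tool(high_risk: bool):
    """Wrap a tool so that high-risk calls are refused unless approved_by is given."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, approved_by=None, **kwargs):
            if high_risk and approved_by is None:
                raise ApprovalRequired(f"{fn.__name__} needs human approval")
            return fn(*args, **kwargs)
        return wrapper
    return decorator


@guarded_tool(high_risk=False)
def lookup_order(order_id: str) -> dict:
    # Read-only lookup; stubbed data for the example.
    return {"order_id": order_id, "status": "shipped"}


@guarded_tool(high_risk=True)
def issue_refund(order_id: str, amount: float) -> str:
    # Side-effecting action; stubbed here, would call a payment system in practice.
    return f"Refunded {amount:.2f} for order {order_id}"
```

With this shape, `lookup_order("A1")` runs freely, `issue_refund("A1", 10.0)` raises `ApprovalRequired`, and `issue_refund("A1", 10.0, approved_by="alice")` goes through, so low-risk tools can be rolled out first while risky ones stay gated.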

Project link: https://github.com/emcie-co/parlant

Here is what people are saying:

“Open source and stable.” @_saransh_saboo

“Parlant team has done an amazing work on this…it’s very good” @saboo_shubham_

“Super interesting approach!” @gargi_gupta97
